
Introduction to Convolutional Neural Networks

Digital & Software Solutions

January 20, 2025

Convolutional Neural Networks (CNNs) are a type of artificial neural network that revolutionized the field of computer vision. They are designed to process and analyze images and have become essential for tasks like image classification, object detection, and even video analysis. Let’s dive into CNNs in a simple, easy-to-understand way.

What is a Neural Network?

Before understanding CNNs, it’s important to grasp the basics of a neural network. A neural network is inspired by how our brain works. It has layers of “neurons” that process and learn patterns from data. Each layer takes input from the previous one, processes it, and passes the output to the next layer.
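To make the idea of layers concrete, here is a minimal pure-Python sketch of two stacked fully connected layers. The weights, biases, and the choice of ReLU as the activation function are hypothetical and picked only for illustration; in a real network the weights are learned from data.

```python
def dense_layer(inputs, weights, biases):
    """One fully connected layer: each neuron takes a weighted sum of all
    inputs, adds its bias, and applies a ReLU activation."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(max(0.0, total))  # ReLU: keep positives, zero out negatives
    return outputs

# Hypothetical weights for a tiny network: 3 inputs -> 2 hidden neurons -> 1 output
x = [1.0, 2.0, 3.0]
hidden = dense_layer(x, weights=[[0.5, -0.2, 0.1], [-0.3, 0.4, 0.2]], biases=[0.0, 0.1])
# The output layer takes the hidden layer's output as its input
output = dense_layer(hidden, weights=[[1.0, 0.5]], biases=[0.0])
print(hidden, output)
```

Each call consumes the previous layer's output, which is exactly the "takes input from the previous one, processes it, and passes the output to the next layer" pattern described above.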

Why Convolutional Neural Networks?

If we use a regular, fully connected neural network (a standard ANN) for images, it quickly becomes computationally intensive. Suppose you have a 100×100 grayscale image: flattened, that is 10,000 input features. If the first layer has 100 neurons, each connected to every input, that layer alone needs 10,000 × 100 = 1,000,000 weights (plus 100 biases). A color image with three channels triples this to 3,000,000 weights. With this many parameters, the network is expensive to train and prone to overfitting, especially when training data is limited.

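Processing all that information directly becomes inefficient. CNNs solve this problem with filters: each filter is a small grid of weights (for example 3×3) that looks at only a small patch of the image at a time, and the same filter is reused at every position. The number of weights therefore depends on the filter size rather than the image size, which makes CNNs much better suited to image data.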
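The parameter arithmetic above can be checked with a few lines of Python. This sketch counts the weights of a fully connected layer and of a convolution layer; the 3×3 filter size and the choice of 100 filters (to mirror the 100 neurons) are illustrative assumptions, not values from the original example.

```python
def dense_params(num_inputs, num_neurons, bias=True):
    """Weights (+ biases) of a fully connected layer: every input connects to every neuron."""
    return num_inputs * num_neurons + (num_neurons if bias else 0)

def conv_params(kernel_h, kernel_w, in_channels, num_filters, bias=True):
    """Weights (+ biases) of a convolution layer: each small filter is shared across
    all image positions, so the count does not depend on the image size."""
    return kernel_h * kernel_w * in_channels * num_filters + (num_filters if bias else 0)

# 100x100 grayscale image flattened to 10,000 inputs, 100 neurons in the first layer
print(dense_params(100 * 100, 100, bias=False))      # 1000000
print(dense_params(100 * 100, 100))                  # 1000100
# Same layer on a 3-channel color image
print(dense_params(100 * 100 * 3, 100, bias=False))  # 3000000
# Hypothetical conv layer: 100 filters of size 3x3 on the grayscale image
print(conv_params(3, 3, 1, 100))                     # 1000
```

Weight sharing cuts the number of parameters to learn and store, not the work per image: the 3×3 filters are still evaluated at every position of the image.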

What is a Convolutional Neural Network: Key Components of CNNs

CNNs have a few key components that make them powerful:

- Convolution Layer: This is the heart of a CNN. It slides a small grid of learnable weights (called a filter or kernel) across the image, looking at one small patch at a time and extracting important features such as edges, textures, or shapes. Because the same filter is applied across the whole image, a feature can be detected no matter where it appears.

- Pooling Layer: After the convolution layer, pooling reduces the spatial size of the feature maps, which cuts the computation needed in later layers. It summarizes each small window either by keeping the maximum value (max pooling) or by averaging the values (average pooling).

- Fully Connected Layer: This layer takes the features extracted by the preceding convolution and pooling layers and makes a final prediction. It is the decision-making part of the network that identifies the object in the image (e.g., a cat, dog, or car).
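The convolution and pooling layers described above can be sketched in plain Python. This is a minimal, illustrative version under simplifying assumptions (a single-channel image, stride 1, no padding, non-overlapping 2×2 pooling). The edge-detection filter is hand-picked for the example, whereas a real CNN learns its filter weights during training.

```python
def conv2d(image, kernel):
    """Slide `kernel` over `image` (stride 1, no padding) and take the weighted
    sum at each position. As in most deep learning libraries, this is technically
    cross-correlation (the kernel is not flipped)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def pool2d(feature_map, size=2, mode="max"):
    """Non-overlapping pooling: summarize each size x size window by its max or mean."""
    pooled = []
    for i in range(0, len(feature_map) - size + 1, size):
        row = []
        for j in range(0, len(feature_map[0]) - size + 1, size):
            window = [feature_map[i + di][j + dj] for di in range(size) for dj in range(size)]
            row.append(max(window) if mode == "max" else sum(window) / len(window))
        pooled.append(row)
    return pooled

# A 6x6 image: dark (0) on the left half, bright (1) on the right half
image = [[0, 0, 0, 1, 1, 1] for _ in range(6)]
# Hand-picked 3x3 vertical-edge filter (a real CNN would learn these weights)
vertical_edge = [[-1, 0, 1],
                 [-1, 0, 1],
                 [-1, 0, 1]]

features = conv2d(image, vertical_edge)  # 4x4 feature map
print(features[0])                       # [0, 3, 3, 0]
print(pool2d(features, 2, "max"))        # [[3, 3], [3, 3]]
print(pool2d(features, 2, "avg"))        # [[1.5, 1.5], [1.5, 1.5]]
```

The feature map responds only in the two middle columns, where the dark-to-bright edge sits. Max pooling keeps the strongest response in each window, while average pooling blends it with the zeros around it.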

How CNNs Work (A Step-by-Step Convolutional Neural Network Example)

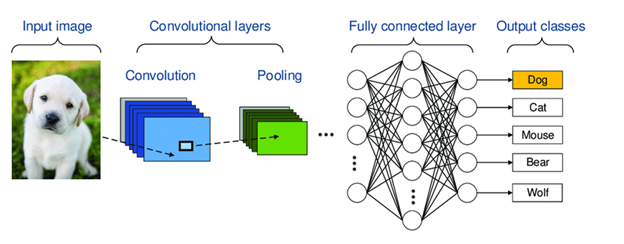

Figure 1. Demonstration of CNN

- Input Image: The network takes an image as input.

- Convolution: The convolution layer applies multiple filters to the image, highlighting important features like edges, curves, or textures.

- Pooling: The pooling layer reduces the spatial size of the feature maps while keeping the most important information.

- More Convolution + Pooling: Several convolution and pooling layers are stacked to learn more complex features as the network goes deeper.

- Flattening: The final stack of feature maps is “flattened” into a 1D vector (a list of numbers) that the fully connected layer can process.

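- Prediction / Output Classes: The fully connected layer analyzes the features and produces a score for each class, typically converted into probabilities with a softmax function, to make a prediction (e.g., is this a cat or a dog?).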
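To see how the data changes shape through these steps, the sketch below traces a hypothetical network with two convolution + pooling stages. The input size (28×28 grayscale), the filter counts (32 and 64), the 3×3 kernels with padding 1, and the two output classes are all assumptions for illustration; they are not taken from Figure 1. The final softmax, a common choice for classification, turns raw output scores into class probabilities.

```python
import math

def conv_out(size, kernel, stride=1, padding=0):
    """Output size along one axis after a convolution:
    floor((size - kernel + 2 * padding) / stride) + 1."""
    return (size - kernel + 2 * padding) // stride + 1

def pool_out(size, window=2, stride=2):
    """Output size along one axis after pooling (same formula, no padding)."""
    return (size - window) // stride + 1

def trace_shapes(height, width, channels, num_classes=2):
    """Follow a hypothetical (height, width, channels) input through the steps above."""
    shapes = [("input", (height, width, channels))]
    # Convolution 1: 32 filters of 3x3; padding 1 keeps height and width unchanged
    height, width, channels = conv_out(height, 3, padding=1), conv_out(width, 3, padding=1), 32
    shapes.append(("conv1", (height, width, channels)))
    # Pooling 1: 2x2 windows with stride 2 halve height and width; channels unchanged
    height, width = pool_out(height), pool_out(width)
    shapes.append(("pool1", (height, width, channels)))
    # Convolution 2 + pooling 2: more filters, smaller maps as the network goes deeper
    height, width, channels = conv_out(height, 3, padding=1), conv_out(width, 3, padding=1), 64
    shapes.append(("conv2", (height, width, channels)))
    height, width = pool_out(height), pool_out(width)
    shapes.append(("pool2", (height, width, channels)))
    # Flattening: the stack of feature maps becomes one long 1D vector
    shapes.append(("flatten", (height * width * channels,)))
    # Fully connected output layer: one raw score per class
    shapes.append(("output", (num_classes,)))
    return shapes

def softmax(scores):
    """Turn raw class scores into probabilities that sum to 1."""
    largest = max(scores)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

for name, shape in trace_shapes(28, 28, 1):
    print(name, shape)
# input (28, 28, 1) -> conv1 (28, 28, 32) -> pool1 (14, 14, 32)
# -> conv2 (14, 14, 64) -> pool2 (7, 7, 64) -> flatten (3136,) -> output (2,)

probs = softmax([2.0, 0.5])  # hypothetical raw scores for ["cat", "dog"]
print(probs)  # the first probability is larger, and the two sum to 1
```

Each pooling stage halves the spatial size while each convolution stage adds channels, so by the time the data is flattened it is a compact vector of learned features rather than raw pixels.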

Why CNNs Are Powerful

- Automatic Feature Learning: CNNs learn important features from images, such as edges, textures, and shapes, on their own, without those features needing to be explicitly programmed.

- Translation Invariance: Because the same filters are applied at every position, and pooling summarizes local regions, CNNs can recognize a pattern wherever it appears in the image, making them more robust to shifts in the input.

- Reduced Complexity: By connecting each neuron to only a small patch of the image and sharing filter weights across positions, CNNs need far fewer parameters to learn and store than fully connected networks.
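The translation property can be demonstrated with a small experiment. For brevity this sketch uses a 1D signal instead of a 2D image (the same reasoning applies along each image axis), and the detector kernel is hand-picked. Strictly speaking, convolution itself is translation-equivariant (shifting the input shifts the feature map), and pooling is what adds invariance; in real CNNs that invariance is only approximate.

```python
def conv1d(signal, kernel):
    """Slide the same kernel along the signal (stride 1, no padding)."""
    k = len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k))
            for i in range(len(signal) - k + 1)]

# Hypothetical pattern detector: responds most strongly to the sequence 1, 2, 1
detector = [1, 2, 1]
pattern_left = [0, 0, 1, 2, 1, 0, 0, 0, 0, 0]
pattern_right = [0, 0, 0, 0, 0, 1, 2, 1, 0, 0]  # same pattern, shifted 3 positions

left_map = conv1d(pattern_left, detector)
right_map = conv1d(pattern_right, detector)
print(left_map)   # [1, 4, 6, 4, 1, 0, 0, 0]
print(right_map)  # [0, 0, 0, 1, 4, 6, 4, 1]
# Equivariance: the feature map moves by the same 3 positions as the input...
print(right_map[3:] == left_map[:-3])  # True
# ...invariance: a global max pool gives the same answer for both positions
print(max(left_map), max(right_map))   # 6 6
```

The peak of the feature map records where the pattern is, while the pooled value records only whether it is present, which is why pooling helps a CNN recognize an object regardless of its position.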

Applications of CNNs

CNNs are widely used in various fields:

- Image Classification: CNNs can recognize which object an image contains (e.g., a cat, dog, or car).

- Object Detection: CNNs can identify and locate multiple objects in a single image, typically by predicting a class label and a bounding box for each object.

- Facial Recognition: CNNs can detect faces in photos and match them to known identities.

- Medical Imaging: CNNs can be used for detecting diseases in medical scans like MRIs or X-rays.

- Self-Driving Cars: CNNs help self-driving cars process camera data to recognize pedestrians, traffic signs, and other vehicles.

- Image Processing: CNNs are also used to enhance or manipulate images through techniques like denoising, super-resolution, and style transfer.

Customer Case Study

A global insurance leader faced challenges processing diverse policy documents like Open Market MRCs and Binders, containing structured, semi-structured, and unstructured data. Manual efforts to extract and classify critical details—such as risk structures, policy types, and contract information—were time-intensive and error-prone. Additionally, transforming these insights into JSON API messages for integration with the Eclipse underwriting platform added complexity. The client needed an automated, scalable solution to streamline document processing, enhance accuracy, and reduce manual intervention.

Hexaware’s AI-powered solution addressed these challenges using ML-based OCR (EasyOCR) and Graph Convolutional Neural Networks (GCNs). OCR extracted text from both digital and scanned PDFs, while GCNs classified key document elements by modeling relationships between data sections as graphs. Extracted insights were transformed into structured JSON messages, enabling seamless integration with Eclipse. This approach delivered over 90% accuracy, reduced manual effort by 75%, and significantly improved scalability, allowing the client to process large volumes of documents efficiently. Hexaware’s solution not only enhanced operational efficiency but also laid the groundwork for future AI-driven innovations in insurance policy management.

Conclusion

Convolutional Neural Networks (CNNs) are a powerful tool for image processing tasks. By breaking down an image into smaller pieces, learning important features, and making accurate predictions, CNNs have become a core technology in fields like computer vision, healthcare, and even autonomous driving. Their ability to “see” and understand images has transformed how we approach real-world challenges.

Whether you’re identifying objects in photos or diagnosing medical conditions, CNNs are at the heart of many technological advancements today!


About the Author

Zeba Shaikh

Generative AI Solution Architect

Zeba Shaikh is a Generative AI Solution Architect at Hexaware, with extensive expertise in Artificial Intelligence, Machine Learning, and Cloud Prototyping. She leverages her skills in analytics, data science, and deep learning frameworks like TensorFlow and PyTorch to drive innovative solutions across diverse industries. Recently, Zeba has been focusing on prototyping generative AI models on AWS and GCP to deliver cutting-edge advancements for business success.


About the Author

Khushal Pachory

Generative AI Lead

Khushal Pachory is a Generative AI Lead at Hexaware, passionate about leveraging artificial intelligence to drive innovation and transformative solutions. With expertise in machine learning, natural language processing, and computer vision, he specializes in developing generative models and delivering comprehensive training in AI, Python, and data science. Khushal's commitment to staying at the forefront of AI research ensures he provides cutting-edge solutions and empowers others to harness the potential of emerging technologies.

